Who Owns Your AI Orchestration?

Who owns your AI orchestration—and what does that mean for your business?

Fresh from AgentCon NYC, and after listening to an excellent presentation by Andrew Connell on Microsoft Copilot and agent development, I found myself thinking less about how we build AI solutions—and more about who controls them.

Specifically: who owns the orchestration layer of your AI architecture, and what are you committing to when you choose one?

It’s a deceptively simple question. But as organizations move from basic AI usage to agentic systems that run real business processes, it quickly becomes one of the most important architectural decisions you’ll make.

Why This Question Matters Now

At AgentCon, Andrew Connell walked through the options available to developers building agents in the Microsoft Cloud, including Copilot Studio and the Agents Toolkit. One point stood out: choosing your orchestrator is an early decision, and one many developers aren’t even aware they’re making.

That choice shapes how your agents behave, how they fail, how they’re debugged, and how easily you can evolve your AI strategy over time. If you only realize this after building production agents, you may already be locked into the wrong part of the ecosystem.

What Is AI Orchestration?

AI orchestration is the layer that coordinates, manages, and controls how multiple AI components work together to deliver an end‑to‑end outcome.

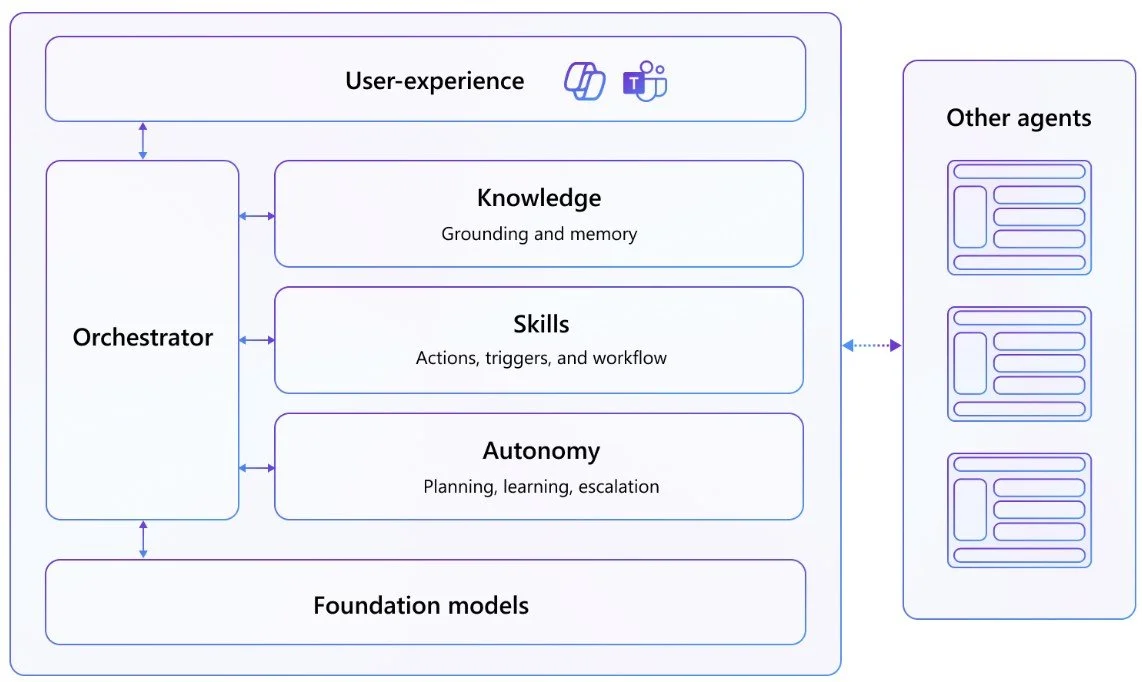

Microsoft’s “Anatomy of an Agent,” showing where the orchestrator sits

Think of it as the conductor of the AI system. It doesn’t perform the task itself—but it decides what runs, when it runs, with what data, and what happens next.

A typical orchestration layer handles:

Workflow control

Sequencing steps such as retrieval → reasoning → tool use → response

Handling branching logic, retries, fallbacks, and errors

Model coordination

Choosing which model to call (large, small, domain-specific)

Routing based on intent, sensitivity, latency, or cost

Tool and action invocation

Calling APIs, databases, Power Automate, ERP, CRM, or ticketing systems

Passing structured inputs and interpreting structured outputs

Context and memory management

Injecting documents, conversation history, and user context

Managing short-term and long-term memory

Policy, security, and governance

Enforcing identity, data access, and compliance rules

Logging, auditing, and prompt safeguards

Observability and optimization

Tracking usage, latency, cost, and quality

Supporting prompt versioning and experimentation

This is not a minor technical detail—it’s the control plane for AI behavior.
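To make those responsibilities concrete, here is a minimal Python sketch of an orchestrator. It is an illustration under stated assumptions, not how Copilot Studio or any other product works: the `Orchestrator` class, the `tool:` / `answer:` reply convention, the routing rule, and the stub models and tools are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Orchestrator:
    """Hypothetical orchestrator; every name here is illustrative."""
    retrieve: Callable[[str], list[str]]            # context injection
    models: dict[str, Callable[[str], str]]         # model coordination
    tools: dict[str, Callable[[str], str]]          # tool and action invocation
    max_attempts: int = 2                           # retry budget per step
    trace: list[str] = field(default_factory=list)  # observability

    def route(self, text: str, sensitive: bool) -> str:
        # Routing rule (an assumption): sensitive requests use the "large" model.
        return "large" if sensitive else "small"

    def attempt(self, label: str, fn: Callable[[str], str], arg: str, fallback: str) -> str:
        # Retry the step, then fall back instead of failing the whole run.
        for n in range(1, self.max_attempts + 1):
            try:
                return fn(arg)
            except Exception as exc:
                self.trace.append(f"{label}: attempt {n} failed ({exc})")
        self.trace.append(f"{label}: using fallback")
        return fallback

    def run(self, text: str, sensitive: bool = False) -> str:
        docs = self.retrieve(text)                   # retrieval
        model = self.route(text, sensitive)
        prompt = "\n".join([*docs, text])
        # Reasoning: the stub model replies "tool:<name>" or "answer:<text>".
        decision = self.attempt(model, self.models[model], prompt, "answer:unavailable")
        self.trace.append(f"{model}: {decision}")
        kind, _, value = decision.partition(":")
        if kind == "tool" and value in self.tools:   # tool use
            return self.attempt(value, self.tools[value], text, "tool unavailable")
        return value                                 # response


calls = {"n": 0}


def flaky_ticket_tool(text: str) -> str:
    # Fails once, then succeeds, to exercise the retry path.
    calls["n"] += 1
    if calls["n"] == 1:
        raise TimeoutError("ticketing API timed out")
    return f"ticket created for: {text}"


orchestrator = Orchestrator(
    retrieve=lambda text: ["policy: refunds need a ticket"],
    models={
        "small": lambda prompt: "tool:create_ticket",
        "large": lambda prompt: "answer:escalated to a human",
    },
    tools={"create_ticket": flaky_ticket_tool},
)

result = orchestrator.run("refund order 123")
print(result)
print(orchestrator.trace)
```

Even in a toy like this, the decisions that shape behavior (which model is called, how many retries happen, what the fallback says) live in the orchestrator rather than in the model.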

Orchestration Shapes the User Experience

Orchestration is foundational to conversational AI experiences, whether you’re using Copilot, ChatGPT, Claude, or Gemini. If two chat systems use the same language model, with the same knowledge and tools, the difference between a great experience and a frustrating one is almost always orchestration:

What context was injected?

Which tools were invoked?

How were errors handled?

Did the system decide to ask a clarifying question, or act autonomously?

This is why orchestration is a major investment area for leading AI platforms. It’s also why it’s a source of competitive differentiation.
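As a rough illustration of that last question, the choice between asking and acting can be written as an explicit policy. The sketch below is hypothetical: the `Interpretation` fields, the 0.8 threshold, and the three outcomes are assumptions made for the example, not any platform’s actual logic.

```python
from dataclasses import dataclass


@dataclass
class Interpretation:
    intent: str
    confidence: float  # 0.0 to 1.0, from a hypothetical intent classifier
    reversible: bool   # whether the resulting action can be undone


def next_step(interp: Interpretation, threshold: float = 0.8) -> str:
    # Low confidence: ask the user rather than guess.
    if interp.confidence < threshold:
        return "clarify"
    # Confident but irreversible: ask for confirmation before acting.
    if not interp.reversible:
        return "confirm"
    # Confident and reversible: act autonomously.
    return "act"


print(next_step(Interpretation("reset_password", 0.95, True)))
print(next_step(Interpretation("delete_account", 0.95, False)))
print(next_step(Interpretation("unknown", 0.40, True)))
```

Two products built on the same model can feel very different simply because they set a threshold like this differently.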

When Orchestration Ownership Becomes Critical

Early in AI adoption, ownership of orchestration may not matter much.

If you’re using a general-purpose chat experience:

You choose a tool with the right UX, price, and compliance profile

If it stops meeting your needs, you can switch later

This is a “two-way door” decision

At this stage, the orchestrator is largely someone else’s concern.

That changes quickly once you move into custom agent development.

When agents begin executing workflows, invoking systems of record, and producing business outcomes, orchestration becomes central to:

Reliability

Explainability

Debugging

Governance

At that point, not knowing how your orchestrator works isn’t just inconvenient—it’s a blocker.

The Hidden Risk: Orchestration Lock‑In

Building agents exposes the tension between deterministic expectations and the inherently non‑deterministic behavior of LLMs. Orchestration is what introduces structure, by determining:

What prompt was sent

What context and tools were included

What rules were applied

If your agent behaves unexpectedly, understanding the orchestrator is the difference between:

Systematic troubleshooting and improvement

Blind trial‑and‑error with no visibility
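Systematic troubleshooting depends on being able to see those three things for every step. A minimal sketch of what such a record could look like follows; the `StepTrace` fields, the rule names, and the sample values are illustrative assumptions, not a schema from any particular platform.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class StepTrace:
    step: str                 # which stage of the workflow ran
    prompt: str               # what prompt was sent
    context_ids: list[str]    # what context was included
    tools_offered: list[str]  # what tools were included
    rules_applied: list[str]  # what rules were applied
    output: str               # what came back


def steps_with_rule(traces: list[StepTrace], rule: str) -> list[str]:
    # Find every step where a given rule fired, e.g. to explain a redaction.
    return [t.step for t in traces if rule in t.rules_applied]


traces = [
    StepTrace("retrieve", "find refund policy", ["doc-17"], [], ["tenant-filter"], "1 document"),
    StepTrace("respond", "answer using doc-17", ["doc-17"], ["create_ticket"], ["pii-redaction"], "draft reply"),
]

# One JSON line per step is easy to store, search, and audit.
log_lines = [json.dumps(asdict(t), sort_keys=True) for t in traces]
flagged = steps_with_rule(traces, "pii-redaction")
print(flagged)
```

When you own the orchestrator, you decide what goes into a record like this. When someone else owns it, that visibility may be partial or missing altogether.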

This is where third‑party AI platforms can become dangerous. Many make it incredibly easy to build agents, workflows, and integrations. But in doing so, they also lock you into:

Their orchestration logic

Their abstractions

Their runtime costs

History is full of “low‑code / no‑code” platforms that promised speed, only to deliver long‑term technical debt and expensive exits. With some AI platforms charging hundreds of dollars per user per month, the cost of orchestration lock‑in can be significant, especially if it underpins core business processes.

Orchestration Is a Point of Differentiation

I started Placid Works with the belief that AI shouldn’t drive organizations into a race to the middle. If orchestration is the conductor of AI, why would you want it to be generic?

If you don’t own orchestration:

Your agents’ behavior converges with that of every other customer on the same platform

Differentiation collapses into configuration

Strategic control shifts away from your organization

At the extreme, you might reasonably ask: do you truly own your AI-enabled business processes—or are you renting them?

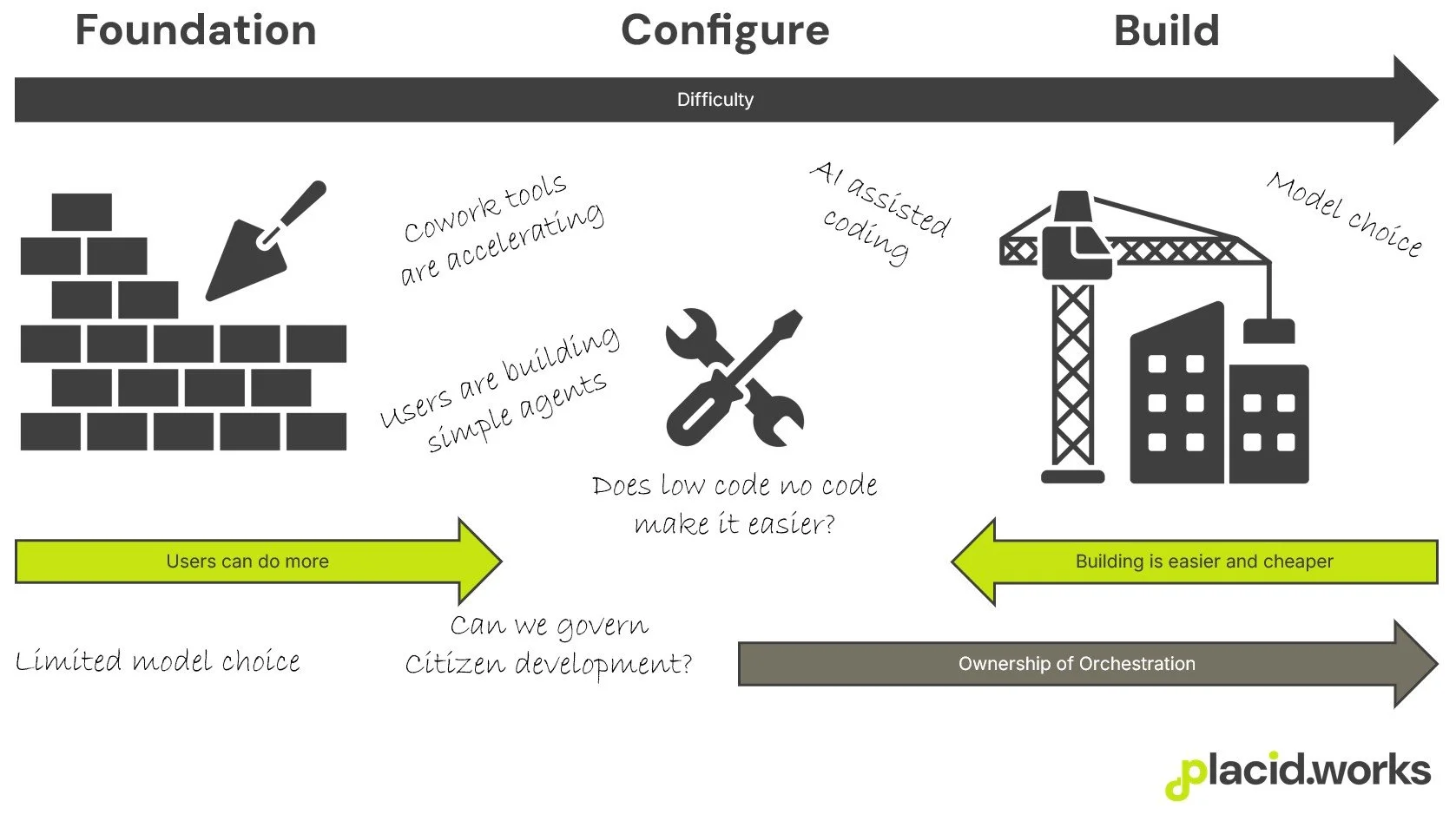

Build vs Configure: Where the Value Is Shifting

As coding becomes easier and AI assistants handle more of the mechanical work, the cost of building orchestration drops—while the value of owning it increases.

Using the Three Degrees of AI Adoption as a lens:

Foundational AI capabilities are becoming commoditized

Building AI is becoming more accessible

The value of pure configuration is being squeezed

Owning orchestration is where these trends intersect. Yes, you’ll need developers. But that investment may be small relative to the strategic control, flexibility, and differentiation you gain.

Conclusion: A Clear Recommendation

Orchestration is a critical architectural layer—whether you see it or not.

You should:

Understand which orchestrator you’re committing to

Know when that commitment becomes hard to reverse

Treat orchestration ownership as a strategic decision, not an implementation detail

If differentiation is your goal, consider when it makes sense to build AI, rather than simply configure it. Because in the long run, owning your AI orchestration may be the difference between using AI—and being shaped by it.